Content warning: panopticism

Artificial Intelligence is an amazing technology that is currently being used to make the most oppressive tool ever conceived by man. This is not an exaggeration. The internet is a vast and interconnected place that, in theory, holds information about every single person who uses it. The problem for data analysts is that there is so much information that human researchers cannot analyze more than a small patch of it in a reasonable time frame. AI solves this problem by creating an electronic eyeball capable of not only scraping massive volumes of data from the web, but also analyzing and making inferences about this data in a fraction of the time it would take a human to do the same. Do you see yet how such a tool could be used for evil?

An electronic eyeball set to automatically monitor the social media feeds of every individual in a population could, in theory, be used to hunt dissidents and criminals simply by making inferences based on their browsing habits online. In theory, if it were illegal to be gay, then an AI tool could probably figure out that someone is gay based on their free use of the internet and generate a report in human-legible English for the proper authorities. This theoretical AI tool could also figure out who is sympathetic to gay causes and create a map of the gay community online for ease of monitoring and infiltration by law enforcement. Guess what? There is nothing theoretical about this technology anymore. Not only can AI aggregate and analyze human speech online, it is actively being used right now to monitor civilian communications and make reports to law enforcement in Israel and the United States.

The war with Hamas gave Israel and the United States the perfect opportunity to sharpen nascent surveillance technologies into full-blown AI-driven tools of war and oppression. One such tool is a classified IDF project called Habsora, which translates into English as “The Gospel.” The Gospel is a targeting system that is capable of automatically analyzing a battlefield (the city of Gaza) and any other communications data you feed it in order to select targets for military personnel to act upon and destroy. Having a computer run constant analysis of a combat zone frees up a lot of human resources for more executive tasks. The Gospel was allegedly first used during the 11-day war with Hamas in 2021 to great success. According to Aviv Kochavi, head of the IDF in 2019, this AI infrastructure has allowed Israel to find 100 targets a day: “To put that into perspective, in the past we would produce 50 targets in Gaza per year. Now, this machine produces 100 targets a single day, with 50% of them being attacked.”

A human being signs off on the final strikes, of course, but the IDF allegedly encourages “quantity over quality” in its operations. The devastation in Gaza speaks to that, which makes me wonder why an artificial intelligence apparatus is needed at all when almost every single building on a block will eventually be razed anyway.

Automated striking capability is only the tip of the iceberg. The apple of Israel’s eye is the perfection of a civilian-oriented surveillance technology capable of policing an entire population automatically. Such a project, which I will elucidate shortly, reminds me of one of the concepts I researched back in college called the Panopticon. Theorized by Jeremy Bentham back in the 18th century, the panopticon was to be a new type of prison that would need far fewer guards to run than a standard jail. It was circular in design, with all prison cells facing inward toward the center of the structure. At this central point would stand a guard tower with one-way glass windows on all sides so that every single cell could be observed from one place.

I’ll let Wikipedia explain the design: “Although it is physically impossible for the single guard to observe all the inmates’ cells at once, the fact that the inmates cannot know when they are being watched motivates them to act as though they are all being watched at all times. They are effectively compelled to self-regulation.” A prisoner cannot see into the central tower, so he cannot know if he is being actively observed. From his perspective, he is always being surveilled. Roughly two centuries later, the philosopher Michel Foucault realized that this system of internalized surveillance applied to many aspects of modern life. He coined the term “panopticism” to describe it. Panopticism observes that human beings can be controlled simply by the threat of being observed. It is an unseen eye that nonetheless threatens to peer into our very hearts without notice. As an interesting aside, Foucault is one of the writers targeted by Republicans as “woke” and unfit to be taught in schools.

The invention of the security camera is a technological actualization of the panopticon. With cameras, every single room in a building can actually be observed without having an army of security guards on location. As with the panopticon, however, the camera is only a one-way mirror. Without the man-hours to go through potentially thousands of hours of recorded footage, the ability of a camera to actively police people is limited and reactive. Artificial intelligence bridges that gap. AI is advanced enough now to go through as many hours of recordings as one could possibly feed it and communicate in English about what it has observed. The same can be applied to sound recordings, images, social media posts… AI has the capacity to make good on the promise of the panopticon. Every single target is being observed and analyzed at the same time, with actual threats being brought to the attention of central command via push notification.

The branch of the Israeli military responsible for creating its surveillance AI is called Unit 8200. According to Guardian reporting on the matter, this unit has successfully created an artificial intelligence that goes through all the social media posts and communications by Palestinians in the West Bank in order to find and report anti-occupation sentiment. This, combined with a checkpoint system that is constantly photographing the face of every Palestinian as they move through Israeli-occupied territory, creates a powerful system of categorization that is actively writing a dossier on every single living, breathing human in occupied territory who uses the internet. Palestinians are not full citizens of Israel despite being subject to this surveillance. Even full-blown citizens of Israel are subject to this surveillance, as in the case of Rita Murad, who was jailed for simply sharing an Instagram post that authorities deemed pro-Hamas.

Unit 8200 needed to gather as many voice recordings and informal written communications as possible in order to create its AI. An artificial intelligence is, put simply, a robot trained to speak by reading text over and over again until it is able to produce a human-legible result. So, Israelis needed a ton of text in order to train an effective AI tool, and they needed to spy in order to do it. According to the Guardian reporting:

“However, when the IDF mobilised hundreds of thousands of reservists in response to the Hamas-led 7 October attacks, a group of officers with expertise in building LLMs returned to the unit from the private sector. Some came from major US tech companies, such as Google, Meta and Microsoft. (Google said the work its employees do as reservists was ‘not connected’ to the company. Meta and Microsoft declined to comment.)

“The small team of experts soon began building an LLM that understands Arabic, sources said, but effectively had to start from scratch after finding that existing commercial and open-source Arabic-language models were trained using standard written Arabic – used in formal communications, literature and media – rather than spoken Arabic.

“‘There are no transcripts of calls or WhatsApp conversations on the internet. It doesn’t exist in the quantity needed to train such a model,’ one source said. The challenge, they added, was to ‘collect all the [spoken Arabic] text the unit has ever had and put it into a centralised place’. They said the model’s training data eventually consisted of approximately 100bn words.”

In a terrifying perversion of the academic process, Israelis actually went in and gathered a ton of primary sources on casual Arabic. They did this not to understand the Palestinians better, but to build a robot that would be able to read their once-private communications for the benefit of police work. The tool, now implemented, will be able to detect subversive individuals simply by the posts they share online. If plugged into the right outlets, posts aren’t even needed. The AI could use data collected by webpages about what Palestinians are simply looking at. Did you know that? With JavaScript, a developer can know exactly how long you look at an image online, or how long you tarry over a specific paragraph. The internet enables a shockingly deep psychoanalysis of individuals that can be carried out at scale with AI. Israel does not have a formal written constitution, and its Basic Laws do not explicitly guarantee freedom of speech; whatever privacy protections exist on paper have evidently not stopped the surveillance described above. So, within Israel’s legal framework, spying is fair game, and prosecuting crimes of thought is more than acceptable in its war on terrorism.
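To make the point about JavaScript concrete, here is a minimal sketch of how "time spent looking" can be computed. It assumes a page script reports each moment an image or paragraph scrolls into or out of view (browsers expose this through the standard IntersectionObserver API). The event shape, the `dwellTimes` function, and the element names are all hypothetical, made up for illustration; this is not a description of any real tracking product.

```typescript
// Hypothetical event shape: one record each time an element enters or
// leaves the visible part of the page. All names are illustrative only.
interface VisibilityEvent {
  elementId: string;   // which image or paragraph
  visible: boolean;    // true = scrolled into view, false = scrolled out
  timestampMs: number; // when it happened, in milliseconds
}

// Add up how long each element was on screen during one visit.
function dwellTimes(
  events: VisibilityEvent[],
  sessionEndMs: number
): Record<string, number> {
  const totals: Record<string, number> = {};
  const visibleSince: Record<string, number> = {};
  // Work on a sorted copy so out-of-order reports do not skew the totals.
  const ordered = events.slice().sort((a, b) => a.timestampMs - b.timestampMs);

  for (const ev of ordered) {
    const start = visibleSince[ev.elementId];
    if (ev.visible) {
      // Remember when the element first came into view.
      if (start === undefined) visibleSince[ev.elementId] = ev.timestampMs;
    } else if (start !== undefined) {
      // Element left the screen: bank the time it was visible.
      totals[ev.elementId] = (totals[ev.elementId] || 0) + (ev.timestampMs - start);
      delete visibleSince[ev.elementId];
    }
  }

  // Anything still on screen counts up to the end of the visit.
  for (const id of Object.keys(visibleSince)) {
    totals[id] = (totals[id] || 0) + (sessionEndMs - visibleSince[id]);
  }
  return totals;
}

// Example visit: a photo is viewed for 4.2 seconds, and a paragraph stays
// on screen from the 1-second mark until the visit ends at 9 seconds.
const example = dwellTimes(
  [
    { elementId: "photo-1", visible: true, timestampMs: 0 },
    { elementId: "photo-1", visible: false, timestampMs: 4200 },
    { elementId: "para-3", visible: true, timestampMs: 1000 },
  ],
  9000
);
console.log(example); // photo-1: 4200 ms, para-3: 8000 ms
```

A few dozen lines are enough to turn scrolling into a per-image, per-paragraph attention record, which is exactly the kind of data an AI system can then analyze at scale.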

The USA has backed Israel’s efforts to oppress the Palestinians since day 1. Now, the new Republican administration is looking to utilize a similar artificial intelligence surveillance infrastructure to prosecute people in America for engaging with criminalized ideas online. Under a plan that Marco Rubio calls “Catch and Revoke,” the social media posts of international students on American university campuses are being analyzed by AI to determine Hamas sympathies. If they are deemed to be harboring disagreeable ideologies, these students’ visas are revoked. Since they are not full citizens of the USA, the free speech rights of these international students are apparently fair game for the administration. This comes at a time of record-low international student attendance rates at our universities. Our country is becoming academically isolated as automated tools of oppression take an active role in police work.

Citizen students should be constitutionally protected from persecution based on their social media posts, but I suppose the First Amendment does not explicitly protect citizens from being spied on by the government. Either way, the fact of the surveillance is chilling enough. Citizens now have to weigh whether speaking out about contested political issues is worth being potentially outed to employers, neighbors, and law enforcement by an algorithm. Citizens also have to weigh whether Googling something will implicate them in a crime.

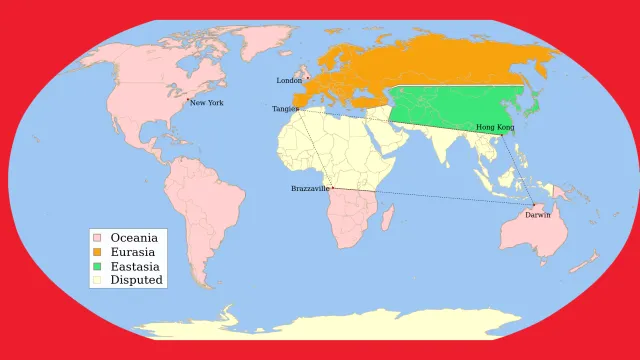

Do these developments remind you of George Orwell’s 1984? They should. In Orwell’s novel, television sets installed in everybody’s homes actively record and monitor the population of London. Orwell’s novel warns of a surveillance state with such a degree of control that it can manage the lives of every single individual within a country with no chance of revolution. The main character in 1984 is constantly paranoid that he is being observed at every moment, and he actually is. With AI, we now live in a world where we are actually being observed every moment of our lives. Every time we step in front of a camera, speak near a microphone, or play with our phones… that data is likely being funneled to an AI project that is building a dossier on each of us individually. It is a simple project of automated data gathering!

It is possible that sometime soon, crimes of thought will be used as evidence in trials to implicate citizens. Private communications, readily accessible to law enforcement, may increasingly form the basis for legal cases and jail time. In Israel, this is already the reality.

Years ago, when conceptualizing an automated surveillance technology such as this, we always imagined that it would be invented in a place like China. While I am sure the Chinese government is eagerly working on its own artificial intelligence surveillance infrastructure, it is the United States and Israel that have actually succeeded at creating and implementing a digital panopticon in our lifetime. Republicans argue that security is priceless. To them, it is necessary for a government to spy on its own civilians in order to prevent acts of terror that harm us all.

The idea of achieving security through oppression runs contrary to my values as an American. I would argue that we went to war with Britain and created the United States specifically to avoid the authoritarian impulse to control people through force. Building trust and community are far more effective tools for preventing violence, in my humble opinion. If we take care of our neighbors, they will take care of us in turn. We strive to love one another, and we want to see one another succeed. We acknowledge that our neighbors live lives just as complex and unfathomable as our own. We know that our lives are far deeper than any AI can give meaning to. If I may get romantic, I think that love is far deeper than anything an AI could ever truly understand. Regardless, the administrations within the United States and Israel appear poised to abandon love seemingly without even trying it. Violence is the means and the end. The Palestinians aren’t neighbors to these people, just subjects that need to be controlled or eliminated by any means necessary.

Some sources for further reading:

Guardian piece on The Gospel targeting system: https://www.theguardian.com/world/2023/dec/01/the-gospel-how-israel-uses-ai-to-select-bombing-targets

Wikipedia article on the panopticon: https://en.wikipedia.org/wiki/Panopticon

NYTimes reporting on Rita Murad’s situation: https://www.nytimes.com/2024/11/03/magazine/israel-free-speech.html

Guardian piece on Unit 8200’s Palestinian AI: https://www.theguardian.com/world/2025/mar/06/israel-military-ai-surveillance

Reuters piece on US Govt AI policing: https://www.reuters.com/technology/artificial-intelligence/us-use-ai-revoke-visas-students-perceived-hamas-supporters-axios-reports-2025-03-06/